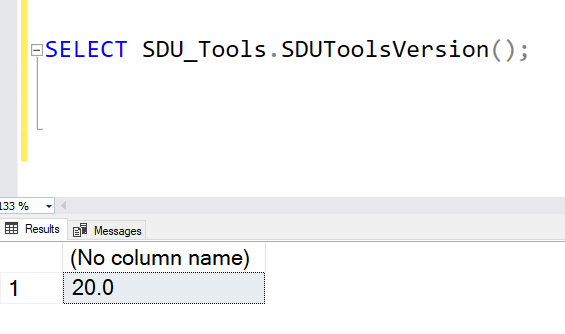

SDU Tools: Version 20 is out the door and ready for download

I’m pleased to let you know that version 20 of our free SDU Tools for developers and DBAs is now released. It’s all SQL Server and T-SQL goodness.

If you haven’t been using SDU Tools yet, I’d suggest downloading them and taking a look. At the very least, they can help when you’re trying to work out how to code something in T-SQL. You’ll find them here:

https://sdutools.sqldownunder.com

Along with the normal updates to SQL Server versions and builds, we’ve added the following new functions:

2020-10-22