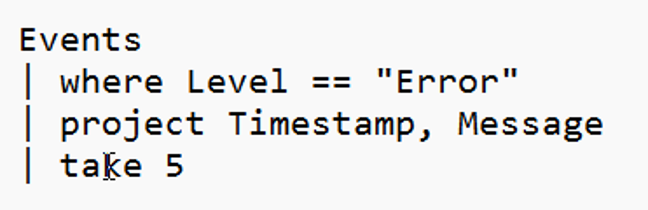

Fabric RTI 101: KQL Filtering

When we start working with large volumes of data, one of the first and most important things we need to do is filter. Filtering is about reducing noise: narrowing the data down so that we keep only the rows relevant to our analysis or our business needs.

In most systems, rows contain a mix of everything: normal activity, background data, edge cases, and sometimes even junk messages. If we tried to process or visualize all of it, our queries would slow down, costs would rise, and the signal we care about would get buried in the noise. Filtering lets us focus only on what matters.
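As a rough illustration of what that looks like in KQL, here is a minimal sketch using the `where` operator. The table name `DeviceEvents` and the columns `Timestamp` and `Severity` are hypothetical, made up for this example; substitute whatever your own table contains.

```kql
// Hypothetical table and column names, for illustration only.
DeviceEvents
| where Timestamp > ago(1h)      // keep only rows from the last hour
| where Severity == "Error"      // keep only rows flagged as errors
```

Each `where` line drops the rows that do not match its condition, so the steps that follow only see the rows we actually care about.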

2026-06-10