Tech Career for Life: Show 4 with Guest Ron Dunn is now published!

Show 4 with Guest Ron Dunn

2026-05-12

2026-05-12

When we work with real-time data in Fabric, not everyone wants to or needs to write KQL or SQL. That’s where visual queries come in.

They provide a no-code interface that lets you build streaming data queries using drag-and-drop actions instead of typing code.

You start with a data source (perhaps an eventstream, a table, or a KQL database) and then visually add steps like filters, joins, or aggregations. Each transformation appears as a node in a flow, so you can see exactly how the data changes from one step to the next. The experience is similar to what you might have seen in Power Query or Dataflows, but it’s built specifically for real-time and streaming data rather than batch processing.

2026-05-11

The default action when performing a backup is to append to the backup file, yet the default action when restoring a backup is to restore just the first backup in that file. This has never made sense to me.

I constantly come across customer situations where they are puzzled that they seem to have lost data after they have completed a restore. Invariably, it’s just that they haven’t restored all the backups contained within a single OS file.
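To make the mismatch concrete, here is a toy Python model of it. This is only a sketch: `BackupFile`, `backup`, `restore`, `init`, and `position` are hypothetical names invented for illustration, not SQL Server APIs. The defaults are chosen to mirror the behaviour described above (in T-SQL terms, roughly `NOINIT` on backup and `FILE = 1` on restore).

```python
class BackupFile:
    """Toy stand-in for one OS backup file holding numbered backup sets."""

    def __init__(self):
        self.backup_sets = []

    def backup(self, snapshot, init=False):
        # Default mirrors the post: append to the file rather than overwrite it.
        if init:
            self.backup_sets.clear()
        self.backup_sets.append(list(snapshot))

    def restore(self, position=1):
        # Default mirrors the post: only the first backup set is restored.
        return list(self.backup_sets[position - 1])


backup_file = BackupFile()
backup_file.backup(["order 1"])             # first backup
backup_file.backup(["order 1", "order 2"])  # second backup, appended to the same file

default_restore = backup_file.restore()
latest_restore = backup_file.restore(position=len(backup_file.backup_sets))

print(default_restore)  # the older snapshot, so "order 2" looks lost
print(latest_restore)   # the newer snapshot, only reached by asking for it
```

The data was never lost in this model. The default restore simply stopped at the first backup set, which is the situation puzzled customers tend to be in.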

2026-05-10

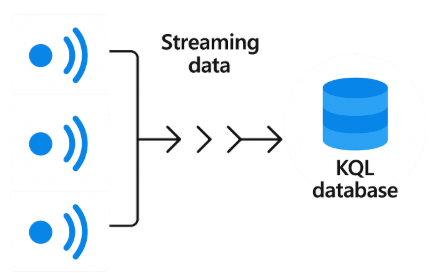

When we talk about KQL databases in Fabric, we’re referring to databases that are specifically optimized for high-volume, time-series, and log-style data. These databases are designed around the kinds of workloads that come from telemetry, sensors, applications, and services that continuously generate events.

Rather than being general-purpose like a relational database, a KQL database is built to handle append-only, event-driven data at scale — often millions of rows per second. The architecture is different from what we see in traditional SQL databases. Data in a KQL database is typically stored in compressed, columnar form, which makes it extremely efficient to query across large time ranges or to aggregate over millions or billions of records.

2026-05-09

I’ve recently been writing about the need for stored procedures to have contracts. Temporary tables add another dimension to that discussion.

Temporary tables are visible within the scope where they are declared but also in sub-scopes. This means that you can declare a temp table in one stored procedure but access it in another stored procedure that is executed from within the first stored procedure.

There are two reasons that people do this. One reason is basically sloppy code, a bit like having all your variables global in a high-level programming language. But the more appropriate reason is to avoid the overhead of moving large amounts of data around, and because we only have READONLY table-valued parameters.

2026-05-08

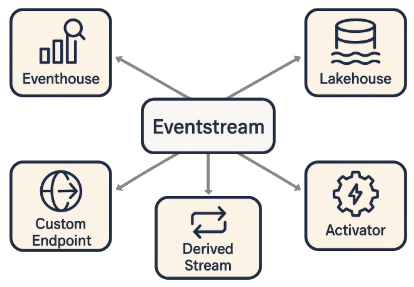

Once your Eventstream is processing data, the next key step is deciding where those events should go — their destinations.

Eventstreams are designed to be flexible: you can route events to multiple destinations at once, both inside Fabric and to external systems. Let’s look at the main ones.

First is the Eventhouse, which provides a high-performance, KQL-based analytical store. It’s ideal when you need to query and visualize live data in real time — for example, detecting anomalies or monitoring live operations. Because it uses KQL, it integrates tightly with Real-Time Dashboards and KQL Querysets in Fabric.

2026-05-07

A few days ago, I wrote about SQL CLR and how I don’t normally use it now, but if I did, which types of objects make sense for it. I briefly mentioned user-defined data types but today, I wanted to call out another limitation of these that I’d like to see addressed (if we keep on using SQL CLR).

Early versions of the user-defined data types in SQL CLR had a limitation on size, where they needed to be serializable within 8KB. That limit is now long gone, so the ability to define new data types using SQL CLR integration is now almost at a usable level, apart from one key omission: indexes.

2026-05-06

When it comes to designing an Eventstream pipeline in Fabric, the process generally follows a clear, three-step flow. First, you start with the inputs — the data sources. This might include Kafka, Event Hubs, IoT Hub, or other streaming systems. At this stage, you define the connections and schemas so Fabric knows how to interpret incoming events.

The second step is where you apply transformations. These are the operations that make raw data more usable and more valuable. You might apply filtering to reduce noise and drop irrelevant events. You might use mapping to rename fields, adjust types, or flatten JSON into something cleaner. And you might use routing to branch different types of events to different destinations. Together, these transformations ensure that events are shaped, cleaned, and directed properly before they move downstream.
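The filter, map, and route operations described above can be sketched in plain Python. This is an illustration of the idea only, not how Eventstream is configured in Fabric. The event shape, the field names (`deviceId`, `payload`, `temp`), and the destination names are all assumptions made up for the example.

```python
Event = dict


def keep_event(event: Event) -> bool:
    """Filtering: drop events that carry no temperature reading (hypothetical rule)."""
    return event.get("payload", {}).get("temp") is not None


def map_event(event: Event) -> Event:
    """Mapping: flatten the nested JSON, rename fields, and adjust types."""
    payload = event["payload"]
    return {
        "device_id": str(event["deviceId"]),
        "kind": event["type"],
        "temperature_c": float(payload["temp"]),
    }


def route_event(event: Event) -> str:
    """Routing: choose a destination name based on the kind of event."""
    return "alerts" if event["kind"] == "alarm" else "telemetry"


def run_pipeline(events) -> dict:
    """Apply filter, then map, then route, collecting events per destination."""
    destinations = {"alerts": [], "telemetry": []}
    for event in events:
        if not keep_event(event):
            continue  # filtered out as noise
        shaped = map_event(event)
        destinations[route_event(shaped)].append(shaped)
    return destinations


raw_events = [
    {"deviceId": 1, "type": "reading", "payload": {"temp": "21.5"}},
    {"deviceId": 2, "type": "alarm", "payload": {"temp": "88.0"}},
    {"deviceId": 3, "type": "reading", "payload": {}},  # no reading, gets dropped
]

routed = run_pipeline(raw_events)
print(routed)
```

Keeping each transformation as its own small function mirrors the way the steps are described: each one does a single job, and the order (filter first, then shape, then direct) keeps irrelevant events from being processed further downstream.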

2026-05-05

It was great to catch up with Jess Pomfret today and to have her on a SQL Down Under podcast.

Jess is a Data Platform Engineer and a dual Microsoft MVP. She started working with SQL Server in 2011, and she says she enjoys the problem-solving aspects of automating processes with PowerShell.

Jess also enjoys contributing to dbatools and dbachecks, two open source PowerShell modules that aid DBAs with automating the management of SQL Server instances.

2026-05-05

I’ve recently been talking to clients about SQL CLR objects. When these were first introduced in SQL Server 2005, many of us had high hopes for them. SQL Server has never been great in regard to extensibility and this provided some way to extend the product.

Nowadays, I avoid SQL CLR. And that’s a real pity. But it’s no longer supported in Azure SQL Database, apart from the system CLR objects of geometry, geography, and hierarchyid. (Note: I’m also not a fan of hierarchyid.) I need to use extensibility methods that are available in the different environments that I work in, and Azure SQL Database is one of those. The same applies to Fabric SQL Database.

2026-05-04