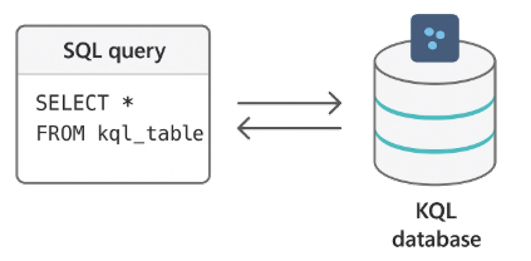

Fabric RTI 101: Basic KQL query syntax

Let’s look at how a basic KQL query is structured.

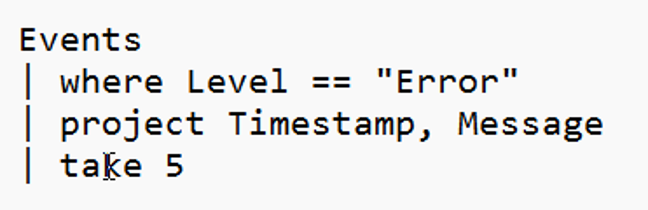

A basic KQL query starts with a table, which is your starting dataset (for example Events, Logs, or Telemetry). From there, you build a pipeline of operations using the pipe (|) symbol, which passes the output of one operation into the next.
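As a minimal sketch of that pipeline shape, the query below first defines a small table inline with datatable, so it can run in a KQL queryset without any ingested data. The table name Events, its columns, and its rows are assumptions for illustration; against a real database you would start from your own table name instead.

```kql
// Hypothetical sample table, defined inline so the query is self-contained.
let Events = datatable(Timestamp: datetime, Level: string, Message: string)
[
    datetime(2024-01-01 10:00:00), "Info",    "Service started",
    datetime(2024-01-01 10:05:00), "Error",   "Disk full",
    datetime(2024-01-01 10:07:00), "Warning", "High latency"
];
Events                      // 1. start from a table
| project Timestamp, Level  // 2. pass its rows on, keeping only two columns
| take 2                    // 3. return at most 2 rows (no ordering is guaranteed)
```

Each pipe hands the rows produced by one step to the next, so the steps read top to bottom in the order they are applied.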

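The most common first step is to filter the data using the where operator. It plays the same role as a SQL WHERE clause, keeping only the rows that satisfy a condition, but it is written as its own step in the pipeline after the table name rather than as a clause inside a SELECT statement.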
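A minimal sketch of filtering with where, again using a hypothetical inline Events table (the column names and values are assumptions for illustration). Note that KQL tests equality with ==, not SQL's =, and that several where steps can be chained, each one narrowing the rows passed down the pipe.

```kql
// Hypothetical sample table, defined inline so the query is self-contained.
let Events = datatable(Timestamp: datetime, Level: string, Message: string)
[
    datetime(2024-01-01 10:00:00), "Info",  "Service started",
    datetime(2024-01-01 10:05:00), "Error", "Disk full",
    datetime(2024-01-01 10:07:00), "Error", "Connection lost"
];
Events
| where Level == "Error"                          // keep rows whose Level is exactly "Error" (case-sensitive)
| where Timestamp > datetime(2024-01-01 10:06:00) // chain a second filter on the remaining rows
| count                                           // return the number of surviving rows in a column named Count
```

With this sample data, the two filters leave one row (the 10:07 "Connection lost" error), so count returns 1. For case-insensitive string equality, KQL also provides the =~ operator.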

2026-06-08