SDU_FileSplit - Free utility for splitting CSV and other text files in Windows

When I was doing some Snowflake training recently, one of the students in the class asked what utility they should use on Windows for splitting a large file into smaller files. They wanted to split files to improve bulk loading performance, so that the load could use all available threads.

On Linux systems, the split command works fine, but the best answer that most people came up with on Windows was to use PowerShell. That’s a fine answer for some people, but not for everyone.

Because the options were limited and people struggled to find a CSV splitter, I decided to fix that by creating a simple utility that’s targeted at exactly this use case.

SDU_FileSplit

SDU_FileSplit is a brand-new command line utility that you can use to split text files (including delimited files).

Usage is as follows:

SDU_FileSplit.exe <InputFilePath> <MaximumLinesPerFile> <HeaderLinesToRepeat> <OutputFolder> <Overwrite> [<OutputFilenameBase>]

The required parameters are positional and are as follows:

- InputFilePath is the full path to the input file, including the file extension
- MaximumLinesPerFile is the maximum number of lines in each output file
- HeaderLinesToRepeat is the number of header lines to repeat in each output file (0 for none; 1 to 10 allowed)
- OutputFolder is the folder for the output files (it must already exist)
- Overwrite is Y or N, to indicate whether existing output files should be overwritten

There is one additional optional parameter:

- OutputFilenameBase is the beginning of the output file names (the default is the input file name)
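
To make the parameter rules above concrete, here’s a minimal Python sketch of how the positional arguments could be validated. It isn’t SDU_FileSplit’s source (the tool is a .NET program). The `SplitOptions` class and `parse_args` function are hypothetical, and accepting Y/N in either case and using the input file name without its extension as the default base are assumptions.

```python
from dataclasses import dataclass
from pathlib import Path
from typing import Sequence


@dataclass
class SplitOptions:
    """Hypothetical container for the validated command-line arguments."""
    input_file: Path
    max_lines_per_file: int
    header_lines_to_repeat: int
    output_folder: Path
    overwrite: bool
    output_filename_base: str


def parse_args(args: Sequence[str]) -> SplitOptions:
    """Validate the positional arguments using the rules described above."""
    if len(args) not in (5, 6):
        raise ValueError("expected 5 required arguments plus 1 optional argument")

    input_file = Path(args[0])
    if not input_file.is_file():
        raise ValueError(f"input file not found: {input_file}")

    max_lines = int(args[1])  # raises ValueError if not a whole number
    if max_lines < 1:
        raise ValueError("MaximumLinesPerFile must be at least 1")

    header_lines = int(args[2])
    if not 0 <= header_lines <= 10:
        raise ValueError("HeaderLinesToRepeat must be between 0 and 10")

    output_folder = Path(args[3])
    if not output_folder.is_dir():
        raise ValueError(f"output folder must already exist: {output_folder}")

    # Assumption: Y/N is accepted in either case.
    overwrite_flag = args[4].strip().upper()
    if overwrite_flag not in ("Y", "N"):
        raise ValueError("Overwrite must be Y or N")

    # Assumption: the default base is the input file name without its extension.
    base = args[5] if len(args) == 6 else input_file.stem

    return SplitOptions(input_file, max_lines, header_lines,
                        output_folder, overwrite_flag == "Y", base)
```

Validating everything up front means a bad argument fails fast, before any output file is created.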

Example Usage

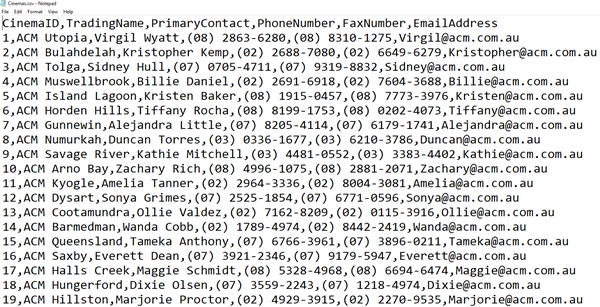

Let’s take a look at an example. I have a file called Cinemas.csv in the C:\Temp folder. It contains some details of just over 2000 cinemas:

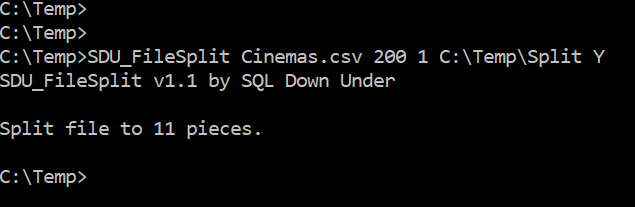

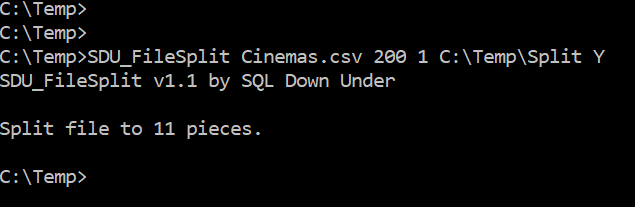

I’ll then execute the following command:

This says to split the file Cinemas.csv in the current folder into files with a maximum of 200 rows each.

As you can see in the previous image, the CSV file has a single header row. I’ve chosen to copy that into each output file. That way, we’re not just splitting the data rows; each output file also gets its own header.

We’ve then provided the output folder and specified Y so that any existing output files are overwritten.
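
The split described in this example (one repeated header row, at most 200 rows per file) can be sketched in a few lines of Python. This is a hypothetical illustration, not how SDU_FileSplit is implemented: the `split_file` function is invented for this sketch, it assumes MaximumLinesPerFile counts only data lines (the repeated header lines are extra), it names outputs `<base>_<n><extension>`, and it assumes the input is UTF-8.

```python
from pathlib import Path
from typing import List, Optional


def split_file(input_path: Path, max_lines: int, header_lines: int,
               output_folder: Path, overwrite: bool,
               base_name: Optional[str] = None) -> List[Path]:
    """Hypothetical sketch: split input_path into files of at most max_lines
    data lines, copying the first header_lines lines into every output file."""
    base = base_name or input_path.stem
    written: List[Path] = []

    def write_chunk(header: List[str], data: List[str]) -> None:
        # Assumed naming scheme: <base>_<n><extension>, e.g. Cinemas_1.csv
        target = output_folder / f"{base}_{len(written) + 1}{input_path.suffix}"
        if target.exists() and not overwrite:
            raise FileExistsError(f"output file already exists: {target}")
        # Always write UTF-8 (no BOM); newline="" keeps the original line endings.
        with target.open("w", encoding="utf-8", newline="") as dst:
            dst.writelines(header + data)
        written.append(target)

    # Assumption: the input is UTF-8; "utf-8-sig" also strips a leading BOM.
    with input_path.open("r", encoding="utf-8-sig", newline="") as src:
        header = [src.readline() for _ in range(header_lines)]
        rows: List[str] = []
        for line in src:
            rows.append(line)
            # Assumption: max_lines counts data lines only, not repeated headers.
            if len(rows) == max_lines:
                write_chunk(header, rows)
                rows = []
        if rows:
            write_chunk(header, rows)

    return written
```

Reading line by line keeps memory use flat regardless of input size. For example, `split_file(Path(r"C:\Temp\Cinemas.csv"), 200, 1, Path(r"C:\Temp\Output"), True)` would mirror this run, with `C:\Temp\Output` standing in as a hypothetical output folder.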

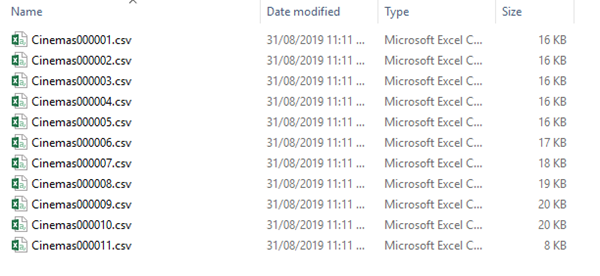

And in the blink of an eye, we have the required output files, and as a bonus, they’re all already UTF-8 encoded.
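
If you want to confirm the encoding of the output files yourself, a quick check is to decode each file’s bytes strictly as UTF-8. This Python sketch is a generic check, not part of SDU_FileSplit, and the function names are hypothetical. Whether the tool writes a byte order mark (BOM) isn’t stated here, so the BOM test is just informational. Note that pure ASCII files also pass, because ASCII is a subset of UTF-8.

```python
from pathlib import Path


def is_utf8(path: Path) -> bool:
    """Return True if the file's bytes decode cleanly as UTF-8."""
    try:
        path.read_bytes().decode("utf-8")
        return True
    except UnicodeDecodeError:
        return False


def has_utf8_bom(path: Path) -> bool:
    """Return True if the file starts with the UTF-8 byte order mark."""
    with path.open("rb") as f:
        return f.read(3) == b"\xef\xbb\xbf"
```

To check a whole output folder, you could loop over `Path(folder).glob("*.csv")` and report any file for which `is_utf8` returns False.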

Downloading SDU_FileSplit

It’s easy to get the tool and start using it. You can download a zip file containing it here.

Just download and unzip it. As long as you have .NET Framework 2.0 or later (that’s pretty much every Windows system), you should have all the required prerequisites.

I hope you find it useful.

Disclaimer

It’s 100% free for you to download and use. I think it’s pretty good, but as with most free things, I can’t guarantee that. You need to decide whether it’s suitable for you.

2019-08-23