SDU Tools: Script SQL Server Table

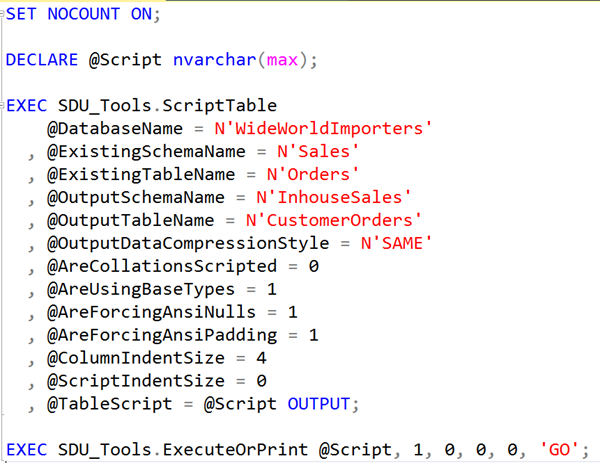

In our free SDU Tools for developers and DBAs, we’ve added a lot of scripting tools. The one I’m describing today, ScriptTable, is among the most sophisticated of our scripting options.

It’s very flexible. For example, it can change the name of the table, or the schema that it’s in. It can force ANSI_NULLS and ANSI_PADDING on or off. It can change user-defined data types to their base types, change compression strategies, and more.
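To give a feel for what those options affect, here is a hand-written sketch of the kind of script you might end up with. It is not actual ScriptTable output, and the schema, table, column, and constraint names are all hypothetical. It assumes a source table in another schema that used a user-defined type for the name column, and that a `Staging` schema already exists.

```sql
-- Hand-written illustration only, not actual ScriptTable output.
-- All schema, table, column, and constraint names are hypothetical.
SET ANSI_NULLS ON;      -- session setting forced on before the table is created
SET ANSI_PADDING ON;    -- likewise for padding behavior
GO

CREATE TABLE Staging.CustomerOrders_Copy     -- different schema and table name from the source
(
    CustomerOrderID int NOT NULL,
    CustomerName nvarchar(100) NOT NULL,     -- base type in place of a user-defined type
    OrderDate date NOT NULL,
    CONSTRAINT PK_Staging_CustomerOrders_Copy PRIMARY KEY (CustomerOrderID)
)
WITH (DATA_COMPRESSION = PAGE);              -- different compression strategy from the source
GO
```

Each commented line corresponds to one of the choices mentioned above: the target name and schema, the ANSI settings, the data type substitution, and the compression option.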

2019-01-30