ADF: Fix - The required Blob is missing - Azure Data Factory - Get Metadata

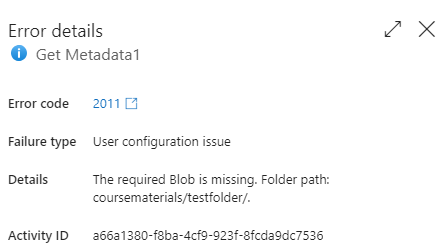

I was doing work for a client that uses Azure Data Factory (ADF), and noticed an unexpected Error code 2011 from a Get Metadata activity.

The pipeline was using a Get Metadata activity to read a list of files in a folder in Azure Storage, so that it could use a ForEach activity to process each of the files. Eventually it became clear that the error occurred when the folder was empty.

Storage Folders

Originally with Azure Storage, folders were only a virtual concept and didn’t really exist. You couldn’t have an empty folder.

So what we used to do back then was place a file that we called Placeholder.txt into any folder as soon as we created it. That way the folder would stay in place. It’s a similar deal to folders in Git, i.e., you can’t have a folder without a file.

It also meant that after using a Get Metadata activity to find the list of files in a folder, we’d have to use a Filter activity to exclude any files like our placeholder file that weren’t going to get processed.
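As a rough illustration of that filtering step, here is a minimal Python sketch that mimics what the Filter activity did with the list returned by Get Metadata. The item shape (objects with `name` and `type`) follows the `childItems` output; the function name and the sample file names are hypothetical.

```python
def exclude_placeholders(child_items, placeholder_names=("Placeholder.txt",)):
    """Return the child items whose names are not placeholder files."""
    skip = set(placeholder_names)
    return [item for item in child_items if item.get("name") not in skip]


# Hypothetical Get Metadata output for a folder holding one real file.
child_items = [
    {"name": "Placeholder.txt", "type": "File"},
    {"name": "sales_2024_01.csv", "type": "File"},
]

for item in exclude_placeholders(child_items):
    print(item["name"])
```

In the pipeline itself, the equivalent Filter condition would be an expression along the lines of `@not(equals(item().name, 'Placeholder.txt'))`.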

Hierarchical Namespaces

But that all changed with the introduction of hierarchical namespaces in Azure Storage. Once you enabled this for an account, folders were first-class citizens and could exist whether or not they contained files.

This is a good thing as it also made access to files in a folder much faster.

It was part of the support that was needed for Azure Data Lake Storage (ADLS) Gen2.

So what was wrong?

The issue in this case was the perception that the placeholder file no longer needed to be there.

Everything else worked, but, by design, if you try to get the metadata for an empty folder, even in a hierarchical namespace, error 2011 is thrown.

That seemed really odd to me.

The fix is in

I talked about this to members of the product group and they gave me the details of what was going wrong.

You can connect to Azure Storage using the Azure Storage Blob connector even if you’ve enabled hierarchical namespaces, but you’ll still get an error if you try to list items in an empty folder. Can’t say I love that, but it is what it is.

The trick is that you must use the Azure Data Lake Storage (ADLS) Gen2 connector instead of the Azure Storage Blob connector. If you do that, you get back an empty childItems array, just as expected. No error is thrown.

Modifying existing code

Now, changing the type of connector is a bit painful as you also have to change the type of dataset, and that could mean unpicking it from wherever it’s used and then hoping you set it all back up again correctly.

Instead, this is what I’ve done:

- Add a new linked service for ADLS Gen2.

- Modify the JSON for the existing dataset: change the location property type from AzureBlobStorageLocation to AzureBlobFSLocation, and change the linked service referenceName to the new linked service.

- Delete the previous storage linked service, once nothing else references it.

And that should all just work without a bunch of reconfiguring.
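The dataset edit described above can be sketched in Python, in case you have a number of dataset JSON files to change. This is only a sketch under stated assumptions: the dataset and linked service names are placeholders, and the function name is hypothetical. The post's steps only change the location type and the referenceName. Renaming `container` to `fileSystem` is my own addition, based on the documented AzureBlobFSLocation schema, so drop that step if your dataset works without it.

```python
import copy
import json


def switch_dataset_to_adls_gen2(dataset, new_linked_service_name):
    """Return a copy of an ADF dataset definition retargeted at ADLS Gen2."""
    updated = copy.deepcopy(dataset)
    props = updated["properties"]
    location = props["typeProperties"]["location"]

    if location.get("type") != "AzureBlobStorageLocation":
        raise ValueError(f"Unexpected location type: {location.get('type')!r}")

    # Step from the post: change the location type.
    location["type"] = "AzureBlobFSLocation"

    # Assumption (not from the post): the documented AzureBlobFSLocation
    # schema calls the top-level container "fileSystem", not "container".
    if "container" in location:
        location["fileSystem"] = location.pop("container")

    # Step from the post: point the dataset at the new linked service.
    props["linkedServiceName"]["referenceName"] = new_linked_service_name
    return updated


# Hypothetical dataset definition; every name here is a placeholder.
dataset = {
    "name": "IncomingFiles",
    "properties": {
        "linkedServiceName": {
            "referenceName": "LS_BlobStorage",
            "type": "LinkedServiceReference",
        },
        "type": "DelimitedText",
        "typeProperties": {
            "location": {
                "type": "AzureBlobStorageLocation",
                "container": "landing",
                "folderPath": "incoming",
            }
        },
    },
}

print(json.dumps(switch_dataset_to_adls_gen2(dataset, "LS_AdlsGen2"), indent=2))
```

The function works on a copy, so the original definition is left untouched and can be compared against the result before anything is published.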

I hope that helps someone.

2024-02-07